The Hidden Threat of Click Farms in Market Research

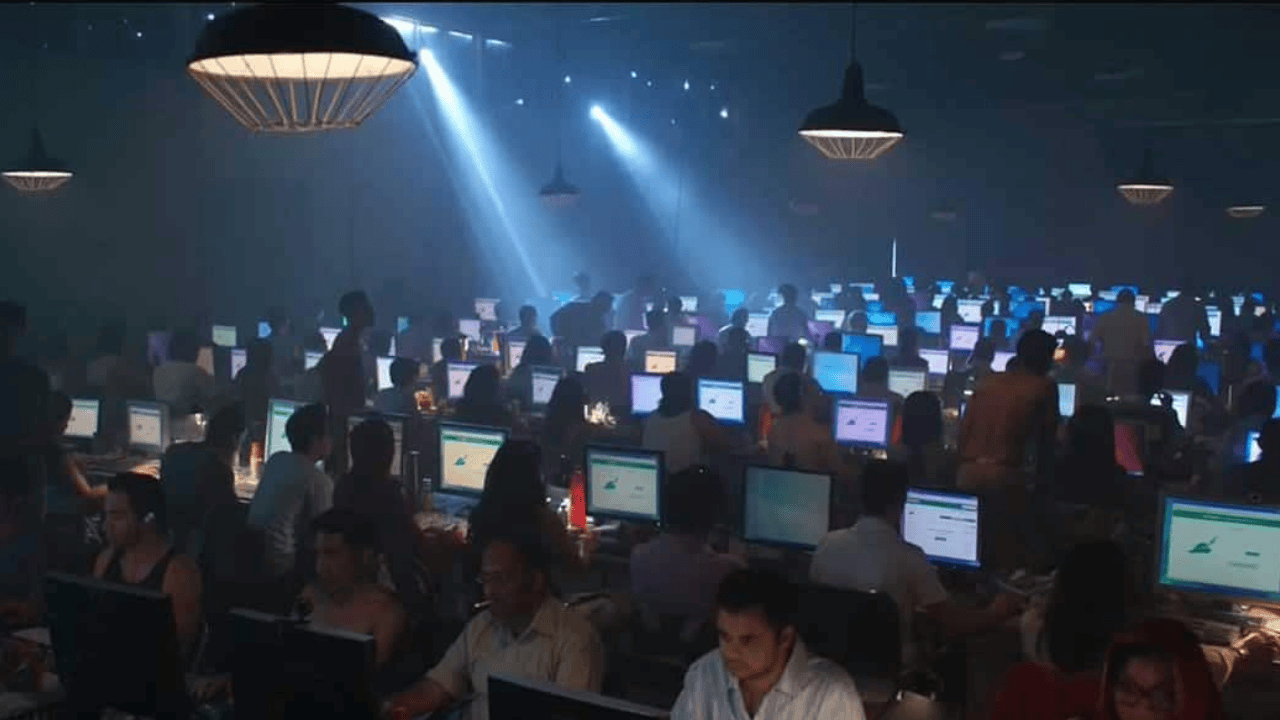

Click farms are among the most sophisticated and damaging forms of survey fraud today. These operations employ dozens or hundreds of low-paid workers in a single location, often a warehouse filled with phones, tablets, and computers, to generate fake survey responses at industrial scale. Unlike simple bots, click farms produce human-like data that passes basic quality checks, and by some estimates they contaminate up to 30% of online survey datasets in high-risk markets.

What makes click farms particularly dangerous? They combine human intelligence with automation. Workers solve CAPTCHAs, pass attention checks, and provide coherent open-ended responses, while scripts handle high-volume submissions. The result: researchers analyze "data" from hundreds of identical devices producing patterned, low-quality insights that drive flawed business decisions and waste thousands in sample costs.

Red Flags: Traffic Patterns That Scream Click Farm

Sudden Volume Spikes from Single Locations

The most obvious indicator is unnatural completion rate surges. Legitimate surveys show steady accrual; click farms deliver 50-200 completions within hours from concentrated geographies. Look for geographic clusters where 20%+ of responses originate from cities representing <1% of your target market.
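The geographic check above can be sketched in a few lines of Python. This is a minimal illustration, not a production rule: the function name, the `market_share` mapping, and the sample cities are assumptions made for the example, and the 20% and 1% cutoffs are simply the figures quoted above.

```python
from collections import Counter

def flag_geo_clusters(response_cities, market_share,
                      min_response_share=0.20, max_market_share=0.01):
    """Return (city, response_share) pairs for cities that supply a large
    share of responses but a tiny share of the target market."""
    if not response_cities:
        return []
    counts = Counter(response_cities)
    total = len(response_cities)
    flagged = []
    for city, n in counts.items():
        response_share = n / total
        # Assumption: a city missing from market_share counts as 0% of the market.
        if (response_share >= min_response_share
                and market_share.get(city, 0.0) < max_market_share):
            flagged.append((city, round(response_share, 3)))
    return sorted(flagged, key=lambda item: item[1], reverse=True)

# Hypothetical data: 30% of responses come from a city that is 0.4% of the market.
cities = ["Springfield"] * 30 + ["Chicago"] * 40 + ["Dallas"] * 30
shares = {"Springfield": 0.004, "Chicago": 0.12, "Dallas": 0.09}
print(flag_geo_clusters(cities, shares))  # [('Springfield', 0.3)]
```

Running the same check per hour of fieldwork, rather than on the full dataset, also surfaces the sudden spikes described above.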

High CTR, Zero Conversions

Click farms excel at starting surveys but rarely complete complex branches. Expect click-through rates >5% with completion rates <20%, especially on multi-stage screeners. Real users drop off gradually; farms show sharp cliffs after initial incentives.

Uniform Session Characteristics

Click farm workers follow scripts, creating identical behaviors: session durations clustering around 3-7 minutes, identical drop-off points, and repetitive answer patterns across demographic questions. Natural variance disappears: responses bunch far more tightly around the mean than the spread a genuine sample would show.
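One simple way to quantify "natural variance disappears" is the coefficient of variation (standard deviation divided by mean) of session durations within a batch. The sketch below assumes durations in seconds; the `min_cv` and `min_n` thresholds are illustrative placeholders that would need calibrating against your own clean historical data.

```python
from statistics import mean, pstdev

def duration_cv(durations_sec):
    """Coefficient of variation of session durations (0.0 if the mean is zero)."""
    m = mean(durations_sec)
    return pstdev(durations_sec) / m if m else 0.0

def looks_scripted(durations_sec, min_cv=0.15, min_n=10):
    """Flag a batch whose durations are suspiciously uniform.

    min_cv and min_n are hypothetical thresholds, not industry standards.
    """
    if len(durations_sec) < min_n:
        return False  # too few sessions to judge
    return duration_cv(durations_sec) < min_cv

farm_batch = [300, 305, 298, 310, 302, 299, 301, 304, 303, 300]
organic_batch = [120, 480, 900, 240, 60, 1500, 330, 700, 200, 410]
print(looks_scripted(farm_batch), looks_scripted(organic_batch))  # True False
```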

Device and Technical Signatures

Device Farm Fingerprints

Modern click farms use device pools with identical hardware signatures. Browser fingerprinting reveals multiple "unique" users sharing the same combination of attributes: screen resolution, installed fonts, and hardware specs. A single shared attribute proves little (1920x1080 is common among genuine users), but 50 responses that match on every attribute are a strong signal. Legitimate mobile users show diverse Android/iOS versions; farms cluster on outdated OS builds optimized for speed.
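A minimal sketch of counting fingerprint collisions follows. The field names in `FINGERPRINT_FIELDS` are hypothetical; substitute whatever attributes your survey platform or fingerprinting script actually records. The `min_group` cutoff is likewise an assumption.

```python
from collections import Counter

# Hypothetical attribute names; adapt to the fields your platform exports.
FINGERPRINT_FIELDS = ("screen", "fonts_hash", "hardware", "os_version", "browser")

def fingerprint(response):
    """Combine several device attributes into one hashable signature."""
    return tuple(response.get(field) for field in FINGERPRINT_FIELDS)

def shared_fingerprints(responses, min_group=5):
    """Return {fingerprint: count} for signatures shared by min_group or more responses."""
    counts = Counter(fingerprint(r) for r in responses)
    return {fp: n for fp, n in counts.items() if n >= min_group}

farm_device = {"screen": "1920x1080", "fonts_hash": "a1b2", "hardware": "4c/4GB",
               "os_version": "Android 8.0", "browser": "Chrome 70"}
sample = [dict(farm_device) for _ in range(6)] + [
    {"screen": "390x844", "fonts_hash": "ff01", "hardware": "6c/6GB",
     "os_version": "iOS 17.2", "browser": "Safari 17"},
    {"screen": "1920x1080", "fonts_hash": "c3d4", "hardware": "8c/16GB",
     "os_version": "Windows 11", "browser": "Chrome 120"},
]
suspicious = shared_fingerprints(sample)
print(len(suspicious), list(suspicious.values()))  # 1 [6]
```

Note that the second genuine respondent also reports 1920x1080 but is not flagged, because only the full combination counts as a match.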

IP Concentration Despite Geo-Spread

Sophisticated farms rotate residential proxies, but patterns emerge: ASN clustering (same ISP blocks), rapid IP switching within sessions, or impossible geolocation jumps (Manila to New York in 2 minutes). Cross-reference with WHOIS data: a genuine residential sample is rarely concentrated in a handful of network blocks.
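The "impossible geolocation jump" test reduces to an implied-speed calculation between two geolocated events for the same respondent. The sketch below uses the haversine great-circle formula; the `max_kmh` and `min_km` parameters are assumed values (roughly airliner speed, and a tolerance for geo-IP imprecision), not established standards.

```python
from math import radians, sin, cos, asin, sqrt

EARTH_RADIUS_KM = 6371.0

def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two latitude/longitude points, in km."""
    phi1, phi2 = radians(lat1), radians(lat2)
    dphi = phi2 - phi1
    dlam = radians(lon2 - lon1)
    a = sin(dphi / 2) ** 2 + cos(phi1) * cos(phi2) * sin(dlam / 2) ** 2
    return 2 * EARTH_RADIUS_KM * asin(sqrt(a))

def impossible_jump(prev, curr, max_kmh=1000.0, min_km=100.0):
    """prev and curr are (lat, lon, unix_seconds) tuples for one respondent.

    Jumps shorter than min_km are ignored because geo-IP lookups are imprecise.
    """
    dist = haversine_km(prev[0], prev[1], curr[0], curr[1])
    if dist < min_km:
        return False
    hours = abs(curr[2] - prev[2]) / 3600
    if hours == 0:
        return True  # a large distance covered in zero time
    return dist / hours > max_kmh

manila = (14.5995, 120.9842, 0)
new_york_2_min_later = (40.7128, -74.0060, 120)
print(impossible_jump(manila, new_york_2_min_later))  # True
```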

User-Agent Anomalies

Farms favor headless browsers and automation-friendly agents. Watch for disproportionate Chrome on Android from desktop resolutions or Safari versions years out of support. Real users maintain OS-browser consistency; farms mix mismatched configurations.

Behavioral Patterns That Betray Human Farms

Cognitive Uniformity

Click farm workers lack genuine engagement, producing patterned responses. Straight-lining appears across matrix questions, open-ended answers recycle 3-5 templated phrases, and demographic inconsistencies abound (25-year-olds claiming 30 years of C-suite experience). NLP semantic clustering reveals unnatural text similarity >85% within small respondent groups.
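As a lightweight stand-in for semantic clustering, the sketch below compares open-ended answers pairwise using character-level similarity from Python's standard `difflib` module. This is a deliberately simpler technique than embedding-based NLP: it catches recycled templates with minor edits but not paraphrases. The 0.85 threshold mirrors the figure above; the helper names and sample answers are assumptions for illustration.

```python
from difflib import SequenceMatcher
from itertools import combinations

def normalize(text):
    """Lowercase and collapse whitespace so trivial edits do not hide a template."""
    return " ".join(text.lower().split())

def near_duplicate_pairs(answers, threshold=0.85):
    """answers maps respondent id -> open-ended text.

    Returns (id_a, id_b, similarity) for pairs at or above the threshold.
    Pairwise comparison is O(n^2), so apply it per batch, not to a whole panel.
    """
    pairs = []
    for (id_a, a), (id_b, b) in combinations(answers.items(), 2):
        ratio = SequenceMatcher(None, normalize(a), normalize(b)).ratio()
        if ratio >= threshold:
            pairs.append((id_a, id_b, round(ratio, 2)))
    return pairs

def is_straight_lined(matrix_answers):
    """True when every item in a matrix question received the same rating."""
    return len(matrix_answers) > 1 and len(set(matrix_answers)) == 1

answers = {
    "r1": "I like the product because it is good quality.",
    "r2": "I like the product because it is good quality!",
    "r3": "Mostly buy it when my kids ask, otherwise the store brand.",
}
dupes = near_duplicate_pairs(answers)
print([(a, b) for a, b, _ in dupes])  # [('r1', 'r2')]
```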

Impossible Timing Patterns

Real humans pause to read; farms blitz surveys. Flag completions <2 minutes for 20+ questions or response times clustering within 1-3 seconds across items. Mouse movement analysis (if available) shows linear paths vs. human hesitations and corrections.
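Both timing rules above can be expressed as a small function over per-question response times. The thresholds come from the text (under 2 minutes for 20+ questions, item times clustering within 1-3 seconds); the `band_share` parameter, which decides how many items must fall in that band before the pattern counts as clustering, is an assumed value.

```python
def timing_flags(item_seconds, min_total=120, min_items=20,
                 fast_band=(1.0, 3.0), band_share=0.9):
    """Return a list of timing flags for one respondent.

    item_seconds: seconds spent on each question, in order.
    band_share is a hypothetical cutoff for "clustering" in the fast band.
    """
    flags = []
    n = len(item_seconds)
    if n >= min_items and sum(item_seconds) < min_total:
        flags.append("speeder")
    in_band = sum(1 for s in item_seconds if fast_band[0] <= s <= fast_band[1])
    if n and in_band / n >= band_share:
        flags.append("uniform_fast_items")
    return flags

farm_like = [2.0] * 25
human_like = [8, 12, 5, 20, 9, 15, 7, 11, 6, 14, 10, 9, 13, 8, 6, 18, 7, 12, 9, 10]
print(timing_flags(farm_like))   # ['speeder', 'uniform_fast_items']
print(timing_flags(human_like))  # []
```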

Weekend/Off-Hours Concentration

Legitimate panel completions spread across respondents' normal waking hours; farms spike during the target country's off-hours, when their workers operate cheaply. Cross-reference timestamps against local time zones: genuine US respondents rarely complete at 3 AM EST.
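A rough sketch of the time-zone cross-check: convert each completion's UTC timestamp to the respondent's claimed local time and measure the share landing in the small hours. For simplicity this assumes a fixed UTC-5 offset for EST and ignores daylight saving time; the 1 AM to 5 AM window is an illustrative choice.

```python
from datetime import datetime, timedelta, timezone

# Assumption: fixed offset for EST; a production check should handle DST.
EST = timezone(timedelta(hours=-5), "EST")

def local_hour(utc_timestamp, tz=EST):
    """Hour of day (0-23) in the respondent's claimed time zone."""
    return datetime.fromtimestamp(utc_timestamp, tz=timezone.utc).astimezone(tz).hour

def off_hours_share(utc_timestamps, tz=EST, start=1, end=5):
    """Share of completions whose local hour falls in [start, end)."""
    if not utc_timestamps:
        return 0.0
    hits = sum(1 for ts in utc_timestamps if start <= local_hour(ts, tz) < end)
    return hits / len(utc_timestamps)

three_am_est = datetime(2024, 1, 15, 8, 0, tzinfo=timezone.utc).timestamp()
one_pm_est = datetime(2024, 1, 15, 18, 0, tzinfo=timezone.utc).timestamp()
print(local_hour(three_am_est), off_hours_share([three_am_est, one_pm_est]))  # 3 0.5
```

A high off-hours share for a batch is a reason to look closer rather than proof of fraud, since shift workers and insomniacs answer surveys too.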

Advanced Detection Techniques

Machine Learning Anomaly Detection

Train models on historical clean data to flag outliers. Ensemble methods combining response patterns, device signals, and timing achieve 87% accuracy against hybrid farms, outperforming single-metric rules. CloudResearch's Fraud Detection Tool automates baseline comparisons across projects.

Graph Analysis of Relationships

Map respondent connections via overlapping IPs, devices, and answer patterns. Click farms form dense clusters; legitimate data shows sparse networks. Gephi visualization reveals "farm nodes" dominating subgraphs.
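Before reaching for a visualization tool, the linking step can be approximated with a union-find structure: respondents who share any identifying value end up in the same cluster, even when the link is indirect. The attribute keys (`ip`, `device_id`) and the sample records below are hypothetical.

```python
from collections import defaultdict

def linked_clusters(respondents, keys=("ip", "device_id")):
    """respondents maps id -> attribute dict.

    Two respondents are linked if they share a value for any key; clusters are
    the connected components of that graph, returned largest first.
    """
    parent = {rid: rid for rid in respondents}

    def find(x):
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path compression
            x = parent[x]
        return x

    first_seen = {}  # (key, value) -> first respondent seen with that value
    for rid, attrs in respondents.items():
        for key in keys:
            value = attrs.get(key)
            if value is None:
                continue
            token = (key, value)
            if token in first_seen:
                root_a, root_b = find(first_seen[token]), find(rid)
                if root_a != root_b:
                    parent[root_b] = root_a
            else:
                first_seen[token] = rid

    clusters = defaultdict(set)
    for rid in respondents:
        clusters[find(rid)].add(rid)
    return sorted(clusters.values(), key=len, reverse=True)

people = {
    "a": {"ip": "10.0.0.1", "device_id": "A"},
    "b": {"ip": "10.0.0.1", "device_id": "B"},  # shares an IP with a
    "c": {"ip": "10.0.0.2", "device_id": "B"},  # shares a device with b
    "d": {"ip": "10.0.0.3", "device_id": "D"},  # unconnected
}
clusters = linked_clusters(people)
print([sorted(c) for c in clusters])  # [['a', 'b', 'c'], ['d']]
```

Respondent "c" never shares an IP with "a", yet both land in the same cluster through "b", which is the kind of indirect link that single-attribute checks miss.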

Cross-Platform Validation

Compare survey data against CRM records, email lists, or loyalty programs. Farms fail loyalty checks (no prior engagement) and produce demographic distributions defying census data (e.g., 80% males in female-targeted studies).

Real-World Case Study: The 500-Device Warehouse

Blanc Research uncovered a Philippine click farm generating 2,500 responses for a CPG brand study. The initial data looked clean, with diverse IPs and passed attention checks, but graph analysis revealed that 78% of responses were linked through just 23 ASNs. Device fingerprinting exposed 156 identical Chrome/Android configurations. Response clustering showed 12 templated open-ended answers repeated 40+ times each.

Post-cleaning, brand awareness dropped 18%, revealing true competitive threats buried under farm noise. The client avoided $750K in misguided ad spend and redirected it to validated channels. On top of that, it saved $42K in wasted incentives, plus avoided opportunity costs.

Prevention: Building Click Farm-Resistant Studies

Pre-Launch Hardening

- Require video/audio verification for high-value incentives
- Use dynamic pricing: higher payouts trigger stricter checks
- Implement invisible behavioral biometrics (keystroke dynamics, mouse entropy)
- Partner only with vetted panels maintaining device reputation databases

Real-Time Monitoring Dashboards

Deploy live alerts for volume spikes, geo-clusters, and pattern anomalies. Auto-pause surveys hitting risk thresholds. Qualtrics and SurveyMonkey enterprise offer built-in fraud engines scoring responses continuously.
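The auto-pause idea can be illustrated with a sliding-window counter over completion timestamps. This is a sketch of the general technique, not how any particular survey platform implements it; the class name, the one-hour window, and the limit of 50 completions are assumptions.

```python
from collections import deque

class SpikeMonitor:
    """Track completions in a sliding time window and signal when to pause."""

    def __init__(self, window_sec=3600, max_in_window=50):
        self.window_sec = window_sec
        self.max_in_window = max_in_window
        self._times = deque()

    def record(self, ts):
        """Record a completion at ts (seconds, non-decreasing).

        Returns True when the window holds max_in_window or more completions.
        """
        self._times.append(ts)
        # Drop completions that have aged out of the window.
        while self._times and self._times[0] <= ts - self.window_sec:
            self._times.popleft()
        return len(self._times) >= self.max_in_window

monitor = SpikeMonitor(window_sec=3600, max_in_window=5)
results = [monitor.record(t) for t in (0, 60, 120, 180, 240)]
print(results)  # [False, False, False, False, True]
```

In practice one monitor per geography or traffic source is more useful than a single global counter, since a farm's spike can hide inside normal overall volume.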

Post-Collection Deep Cleaning

Always run multi-signal validation:

Risk Score = 0.3×IP_Rep + 0.25×Device_Cluster + 0.2×Timing_Anomaly + 0.15×Pattern_Similarity + 0.1×Text_Entropy

A score above 0.7 triggers exclusion.
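The weighted score translates directly into code. The sketch below assumes each signal has already been scaled to the range 0 to 1, with 1 meaning most suspicious; the article's formula does not specify the scaling, so that convention and the dictionary key names are assumptions.

```python
WEIGHTS = {
    "ip_rep": 0.30,
    "device_cluster": 0.25,
    "timing_anomaly": 0.20,
    "pattern_similarity": 0.15,
    "text_entropy": 0.10,
}
THRESHOLD = 0.7

def risk_score(signals):
    """Weighted sum of per-respondent signals, each assumed to lie in [0, 1]."""
    missing = WEIGHTS.keys() - signals.keys()
    if missing:
        raise ValueError(f"missing signals: {sorted(missing)}")
    for name in WEIGHTS:
        if not 0.0 <= signals[name] <= 1.0:
            raise ValueError(f"{name} must be in [0, 1], got {signals[name]}")
    return sum(WEIGHTS[name] * signals[name] for name in WEIGHTS)

def should_exclude(signals):
    """Apply the exclusion rule: a score strictly above the threshold."""
    return risk_score(signals) > THRESHOLD

suspect = {"ip_rep": 0.9, "device_cluster": 0.8, "timing_anomaly": 0.7,
           "pattern_similarity": 0.6, "text_entropy": 0.5}
clean = {name: 0.2 for name in WEIGHTS}
print(round(risk_score(suspect), 2), should_exclude(suspect), should_exclude(clean))
# 0.75 True False
```

Because the weights sum to 1.0, the score stays in the same 0 to 1 range as its inputs, which keeps the 0.7 threshold interpretable.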

The Business Cost of Missing Click Farms

Industry estimates peg survey fraud losses at $1.2B annually, with click farms accounting for roughly 40% of that total. Cint reports researchers rerunning 25% of studies due to undetected contamination. Agencies lose client trust when insights prove unreliable. The fix? Layered defenses that catch 90%+ of farm activity through signal fusion.

Conclusion: Hunt Click Farms Proactively

Click farms thrive on researcher complacency. Basic checks fail against hybrid human-bot operations scaling fake engagement profitably. Success demands vigilance: monitor patterns, fingerprint devices, analyze behaviors, and validate continuously.

Implement these techniques across your pipeline. The alternative—decisions built on warehouse fiction—isn't just risky; it's irresponsible. Clean data isn't a luxury; it's your competitive edge.